I restarted DAZ Studio, and at that point, I did see the preview render using PMC. It’s not what I had expected, but with any test, there’s information in here. Turns out this was a mistake: it took my system about 47 minutes to render the scene, and all I had to show for it was a 23k black picture (see inset on the right – I thought I’d post it here for a laugh). According to the Windows Task Manager, my GPU was using about 1% during this render, while my CPU was using about 20-30%.

This seemed a bit unusual, but perhaps there was a tick box I could have ticked and had forgotten. At first I thought it does not give me a real-time preview while it’s working. The third option takes the longest to render, and I’ve not had much look with it at all. Path Tracing, 500 samples – 02:20 Path Tracing, 1000 samples – 04:37 PMC While I don’t see any immediate definition changes, I find that Path Tracing renders slightly lighter than Direct Light, and more samples appear to make the image slightly brighter (most likely related to Global Illumination). To get a direct comparison to the Direct Light option, I’ve rendered the default and a pass with 500 samples. The second option came up with 1000 samples by default, and takes a bit longer to render per pass. I find that Diffuse gives a slightly more natural and brighter result, comparable to what we get with Path Tracing. We’re talking a 20 second variation here between the options.

The impact on render time is negligible, but as you can imagine Diffuse takes the longest, while off is the most efficient. The diffuse option adds Global Illumination = off Global Illumination = Diffuse Here are the other two variations for completion. Ambient Occlusion (default, as used above).Note that we have three options for Global Illumination: Direct Lighting, 500 samples – 01:53 Direct Light, 2000 samples – 07:15 Just to make sure, I took another pass with 4x the samples, but it still had grain. Without the denoiser grain is a bit much, with with it enabled it looks fine. It came up with 500 samples as a default, which is why I’ve rendered it as such. The default option, or rather the first one in the list is Direct Light. These were saved as 16 bit PNG files (click to enlarge). Until that point, minor grain is visible. The renders below were done at 2000×1500 with the built-in denoiser, which kicks in at the end of the image.

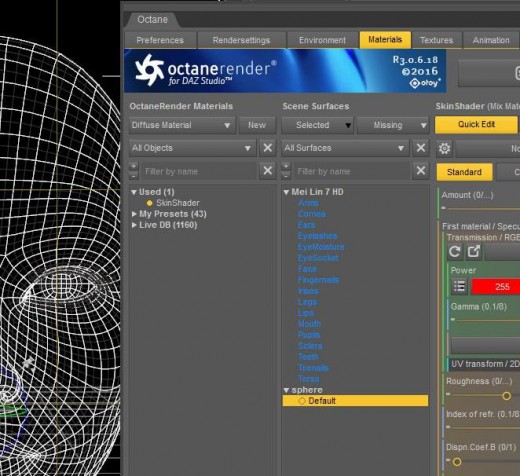

Let’s see if we can visually detect any differences. Rather than read the manual, which I’m sure would explain what the difference between each option, I did a few test renders of the scene we built. However, there appear to be four different types of options on how to achieve that. It’s easy to get brain overload with so many sliders! After some fiddling though, I discovered how to render the final image. Paint a portrait of a woman that showcases her beauty and artistry through a unique combination of vibrant colors and geometric shapes, capturing her essence and individuality in an imaginative and captivating composition.In a recent stream I got accustomed with some of the options of the Octane Plugin for DAZ Studio. Start with a basic geometric shape and use stable diffusion to create a complex, abstract design. Let’s get started! 15 Unique Stable Diffusion Prompts For Your Art 1. In this article, we’ve compiled a list of 15 stable diffusion prompts that you can use to create your own AI art. If you’re looking for something new and unique, then we suggest giving stable diffusion a try. Stable diffusion is a form of generative art that produces results that are unpredictable and often visually stunning. This new type of art is being enabled by “stable diffusion,” and it’s based on the idea that art can be created by algorithms. If you’re like most people, you probably think of art as something that’s created by an artist – someone who has a “vision” and an “inspiration.” But what if we told you that there’s a new type of art that’s being created by artificial intelligence (AI)?
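The Direct Light and Path Tracing render times quoted in this post can be turned into a rough cost per sample, which puts numbers on the observation that Path Tracing takes a bit longer per pass. This is a minimal Python sketch, assuming each quoted time is the total wall-clock time of the render (so it also includes scene setup and the denoiser pass, not just sampling). The names `RENDERS`, `to_seconds`, `seconds_per_sample` and `scaling` are made up for illustration.

```python
# Render times from the tests in this post (mm:ss), keyed by (option, samples).
# Assumption: each time is the total wall-clock render time, overhead included.
RENDERS = {
    ("Direct Light", 500): "01:53",
    ("Direct Light", 2000): "07:15",
    ("Path Tracing", 500): "02:20",
    ("Path Tracing", 1000): "04:37",
}


def to_seconds(mmss: str) -> int:
    """Convert an 'mm:ss' string into a number of seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


def seconds_per_sample(option: str, samples: int) -> float:
    """Average time per sample for one render (overhead spread across samples)."""
    return to_seconds(RENDERS[(option, samples)]) / samples


def scaling(option: str, low: int, high: int) -> tuple[float, float]:
    """Return (sample ratio, time ratio) between two passes of the same option."""
    t_low = to_seconds(RENDERS[(option, low)])
    t_high = to_seconds(RENDERS[(option, high)])
    return high / low, t_high / t_low


if __name__ == "__main__":
    for (option, samples), mmss in RENDERS.items():
        print(f"{option:13} {samples:5} samples  {mmss}  "
              f"{seconds_per_sample(option, samples):.3f} s/sample")
    for option, low, high in [("Direct Light", 500, 2000), ("Path Tracing", 500, 1000)]:
        sample_ratio, time_ratio = scaling(option, low, high)
        print(f"{option}: {sample_ratio:.0f}x samples -> {time_ratio:.2f}x time")
```

On these four data points, Direct Light works out to about 0.22 to 0.23 seconds per sample and Path Tracing to about 0.28. Quadrupling the Direct Light samples costs about 3.85x the time, and doubling the Path Tracing samples costs about 1.98x, so on this scene render time grows almost linearly with the sample count.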
